.

Also asked, what is the best format for spark storage?

Key takeaways. The default file format for Spark is Parquet, but as we discussed above, there are use cases where other formats are better suited, including: SequenceFiles: Binary key/value pair that is a good choice for blob storage when the overhead of rich schema support is not required.

Beside above, how does spark read a csv file?

- Do it in a programmatic way. val df = spark. read . format("csv") . option("header", "true") //first line in file has headers .

- You can do this SQL way as well. val df = spark. sql("SELECT * FROM csv.` hdfs:///csv/file/dir/file.csv`") Dependencies: "org.apache.spark" % "spark-core_2.11" % 2.0.

Regarding this, what is are the benefit's of using appropriate file formats in spark?

Spark provides the access and ease of storing the data, it can be run on many file systems. For example, HDFS, Hbase, MongoDB, Cassandra and can store the data in its local files system. Spark supports all the file formats supported by Hadoop. There are many benefits of using appropriate file formats.

Does spark support Orc?

Spark's ORC support leverages recent improvements to the data source API included in Spark 1.4 (SPARK-5180). As ORC is one of the primary file formats supported in Apache Hive, users of Spark's SQL and DataFrame APIs will now have fast access to ORC data contained in Hive tables.

Related Question AnswersWhat is storage format?

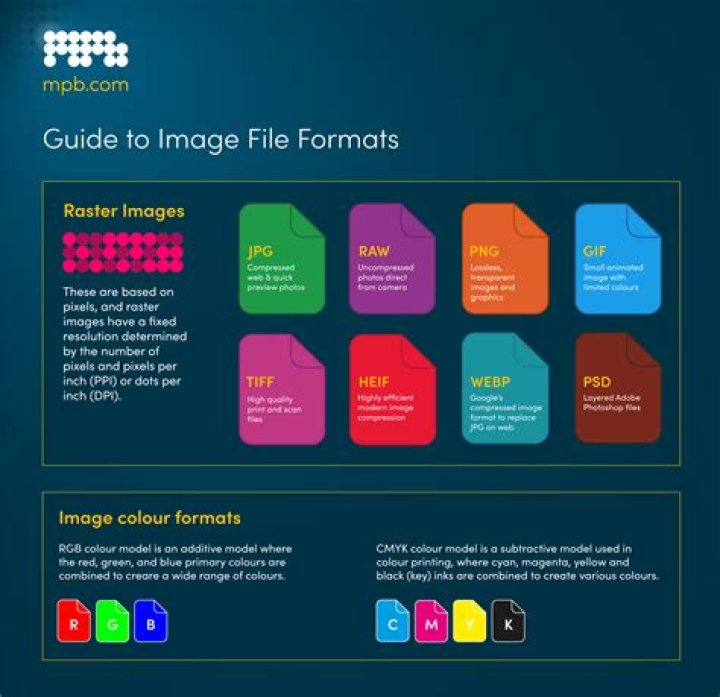

A file format is a standard way that information is encoded for storage in a computer file. It specifies how bits are used to encode information in a digital storage medium. Some file formats are designed for very particular types of data: PNG files, for example, store bitmapped images using lossless data compression.Does spark store data?

Spark is not a database so it cannot "store data". It processes data and stores it temporarily in memory, but that's not presistent storage. Spark can access data that's in: SQL Databases (Anything that can be connected using JDBC driver)Where is Spark data stored?

Spark is not a database so it cannot "store data". It processes data and stores it temporarily in memory, but that's not presistent storage. In real life use-case you usually have database, or data repository frome where you access data from spark.What is HDFS file format?

A file format is just a way to define how information is stored in HDFS file system. These issues are exacerbated with the difficulties managing large datasets, such as evolving schemas, or storage constraints. The various Hadoop file formats have evolved as a way to ease these issues across a number of use cases.Is spark DataFrame in memory?

Spark DataFrame Features Custom Memory Management: Data is stored off-heap in a binary format that saves memory and removes garbage collection. Optimized Execution Plans: Spark catalyst optimizer executes query plans, and it executes the queries on RDDs.What is in memory in spark?

In Apache Spark, In-memory computation defines as instead of storing data in some slow disk drives the data is kept in random access memory(RAM). Also, that data is processed in parallel. By using in-memory processing, we can detect a pattern, analyze large data.Which of the following file formats are supported by Spark?

With Apache Spark release 2.0, the following file formats are supported out of the box:- TextFiles (already covered)

- JSON files.

- CSV Files.

- Sequence Files.

- Object Files.

What is Spark used for?

Apache Spark is open source, general-purpose distributed computing engine used for processing and analyzing a large amount of data. Just like Hadoop MapReduce, it also works with the system to distribute data across the cluster and process the data in parallel.Is GZ Splittable?

Snappy and GZip blocks are not splittable, but files with Snappy blocks inside a container file format such as SequenceFile or Avro can be split.Is Snappy a splittable?

According to this Cloudera post, Snappy IS splittable. For MapReduce, if you need your compressed data to be splittable, BZip2, LZO, and Snappy formats are splittable, but GZip is not. But from the hadoop definitive guide, Snappy is NOT splittable.Which is the method to create RDD in spark?

RDDs are generally created by parallelized collection i.e. by taking an existing collection in the program and passing it to SparkContext's parallelize() method. This method is used in the initial stage of learning Spark since it quickly creates our own RDDs in Spark shell and performs operations on them.What types of data can spark handle?

Apache Spark is an open source big data processing framework that enables large-scale analysis through clustered machines. Coded in Scala, Spark makes it possible to process data from data sources such as Hadoop Distributed File System, NoSQL databases, or relational data stores like Apache Hive.What is client mode in spark?

Spark Client Mode The behavior of the spark job depends on the “driver” component and here, the”driver” component of spark job will run on the machine from which job is submitted. Hence, this spark mode is basically called “client mode”. When a job submitting machine is within or near to “spark infrastructure”.How can you create an RDD for a text file?

To create text file RDD, we can use SparkContext's textFile method. It takes URL of the file and read it as a collection of line. URL can be a local path on the machine or a hdfs://, s3n://, etc. The point to jot down is that the path of the local file system and worker node should be the same.What is ORC and parquet?

ORC is a row columnar data format highly optimized for reading, writing, and processing data in Hive and it was created by Hortonworks in 2013 as part of the Stinger initiative to speed up Hive. Parquet files consist of row groups, header, and footer, and in each row group data in the same columns are stored together.Do you need to install spark on all nodes of the yarn cluster while running spark on yarn?

No, it is not necessary to install Spark on all the 3 nodes. Since spark runs on top of Yarn, it utilizes yarn for the execution of its commands over the cluster's nodes. So, you just have to install Spark on one node.What is sequence file format in hive?

Hive Sequence File Format Sequence files are Hadoop flat files which stores values in binary key-value pairs. The sequence files are in binary format and these files are able to split. The main advantages of using sequence file is to merge two or more files into one file.How do I convert a spark Dataframe to a csv file?

4 Answers- You can convert your Dataframe into an RDD : def convertToReadableString(r : Row) = ??? df. rdd.

- With Spark <2, you can use databricks spark-csv library: Spark 1.4+: df.

- With Spark 2.

- You can convert to local Pandas data frame and use to_csv method (PySpark only).

How do I create a CSV file in Spark?

1 Answer- dataframe.

- .repartition(1)

- .write.

- .mode ("overwrite")

- .format("com.intelli.spark.csv")

- .option("header", "true")

- .save("filename.csv")